Jun 16, 2015

Okay, so the title. What's that about? I mean, good gravy, how are cosmic rays in anyway relevant to a blog about SQL Server? Srsly?

Have you ever heard of DBCC? No? Okay, it's a Transact-SQL statement. It stands for Database Console Command. Hands up all those who thought it stood for “Database Consistency Checker”. Okay, just a couple of you, pretty good. So, DBCC statements can be grouped into four categories: Maintenance, Miscellaneous, Informational and Validation.

Today, I'll be looking at a maintenance category statement, DBCC CHECKDB. Now, DBCC CHECKDB is a statement that will run a check on the logical and physical integrity of all the objects in the specified database by performing the following operations:

- DBCC CHECKALLOC on the database – checks the consistency of disk space allocation structures for a specified database.

- DBCC CHECKTABLE on every table and view in the database – checks the integrity of all the pages and structures that make up the table or indexed view.

- DBCC CHECKCATALOG on the database – checks for catalog consistency within the specified database.

- Validate the contents of every indexed view in the database.

- Validate link-level consistency between table metadata and file system directories and files when storing varbinary(max) data the file system using FILESTREAM.

- Validate the Service Broker data in the database.

Phew! That's quite a bit for only 12 keystrokes. As you can probably imagine, that's a particulary resource intensive process. Needless to say it can cause performance problems when it's run concurrently with a workload. Some people say, and I know I'm using a “Strawman argument,” that you should rely on the WITH CHECKSUM option when performing a full database backup instead. I beg to differ.

First, some background. SQL Server has some page protection options. I'd prefer to call them page corruption detection options. Mainly because they don't actually protect anything from being corrupted, they'll just let you know when they have been. A subtle difference, but significant none the less.

The default page protection option is the CHECKSUM, which enables page checksums, and is enabled on all new databases since SQL Server 2005 (unless someone turned it off in the model database, that is). This means that when a data file page is written to disk, usually by a CHECKPOINT operation, a simple checksum is calculated for the 8KB page contents and written into the page's header.

Alright, so when a data file page is read from disk into memory, the checksum for the page is recalculated and compared to the value in the page's header. If they don't match, SQL Server knows that something has gone wrong with the I/O subsystem and raises an 824 error.

Additionally, SQL Server will check the page's checksums during a backup if you've specified the WITH CHECKSUM option. If there's a problem here, the backup will fail and the corrupted page will be reported. So far, so good. But wait, there's more.

Hopefully, you can see the problem here. Page checksums detect page corruption caused by the I/O system. But what if the corruption hasn't been caused by the I/O subsystem?

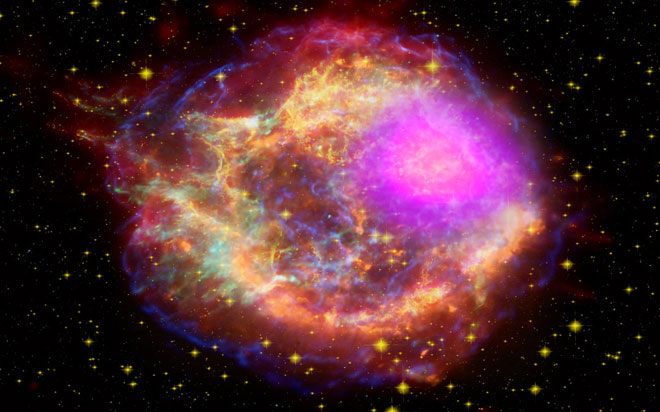

Consider this situation. SQL Server reads a data file into memory from disk. There is nothing wrong with the page at this stage and the checksums verify that. The page is modified via an update statement. Now, before the next checkpoint operation, a cosmic ray strikes a section of a RAM chip. It corrupts the 8KB block holding the modification. The checkpoint occurs, the checksum over the page is calculated and written to the header of the page when it is persisted to the disk.

The page is later read from disk to memory and the checksum is validated, no problems are detected. Only we know there is a problem. The page checksum algorithm is incapable of detecting this kind of error since it didn't occur as a result of a faulty write in the I/O subsystem. Similarly, a backup operation won't detect any problems either for precisely the reasons already stated. By design, page checksums can only detect corruption that occured in the I/O subsystem.

This is where our good friend DBCC CHECKDB comes in. It interprets the page contents by validating row structures, validating column values and checking linkages in indexes. It should always be able to detect a corrupt page, even if the corruption occured in memory.

My advice is this: Use the WITH CHECKSUM option when you're doing your full database backups by all means, but please include some provision for running a regular DBCC CHECKDB. That check doesn't need to be run on a production system either, you could always restore the backup on another server and run consistency checks on that server. Remember, page checksums are fallible.

See, cosmic rays are something we should all be concerned about. “See” you next time. Cheers.

How do your Excel skills stack up?

Test NowNext up:

- How to give a killer presentation or…not let your presentation kill you!

- For Each loops in Excel VBA

- Taking the first step towards power by rooting your mobile device

- Archiving old emails in Outlook

- SharePoint permissions on views using workflows

- The System Prism

- Reuse slides in PowerPoint

- Code sharing strategies in Windows Universal Apps

- Present a PowerPoint 2013 presentation online

- Poodle or terrier – are your systems safe?

Previously

- Quick ways to automate in Photoshop – Part 3: Batch processing

- New features of Microsoft CRM 2013

- Customising the Project 2013 interface

- Different communication styles – the best communicators know this, so should you (Part 2)

- Managing mobile devices with Windows Intune and SCCM 2012 R2

- Recording screen action in PowerPoint 2013

- A fantastic add-in for OneNote 2013

- Taking a closer look at Universal Apps

- Who does what in managing change?

- Creating Quick Parts in Microsoft Word